// Introduction

The Privacy Frontier in AI

My research proposal was selected for funding through the Thompson Rivers University (TRU) Undergraduate Research Experience Award Program (UREAP). This grant provides a significant opportunity to pursue a topic I am deeply passionate about: leveraging Artificial Intelligence — specifically Large Language Models — to enhance the student experience while rigorously prioritizing data privacy. You can learn more about the program on the official TRU UREAP Award page.

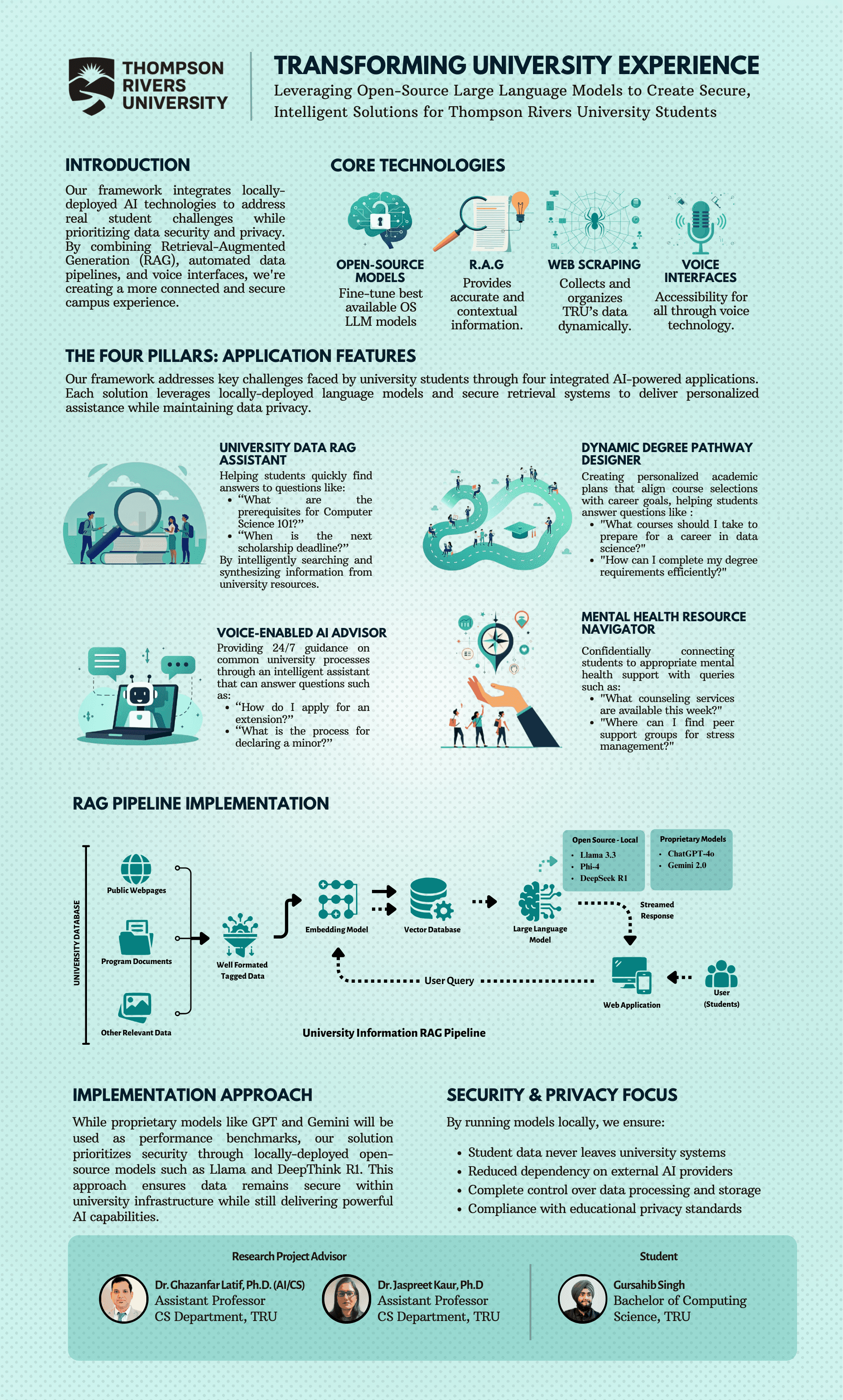

Navigating university resources, academic requirements, and support services can often be challenging. My project aims to develop a secure, locally hosted AI assistant tailored to the needs of TRU students. By combining open-source LLMs, Retrieval-Augmented Generation (RAG), and intuitive interfaces including voice commands, the assistant is meant to provide timely, accurate, and context-aware support without compromising sensitive student data. This research stems from my own experiences and a genuine desire to create tools that help students succeed: tools that are intelligent, ethical, and built with privacy at their core.

// Objectives

Strategic Goals

// Visualization

The Framework

Figure: Visual overview of the research framework and implementation strategy.

// Implementation

Technical Pillars

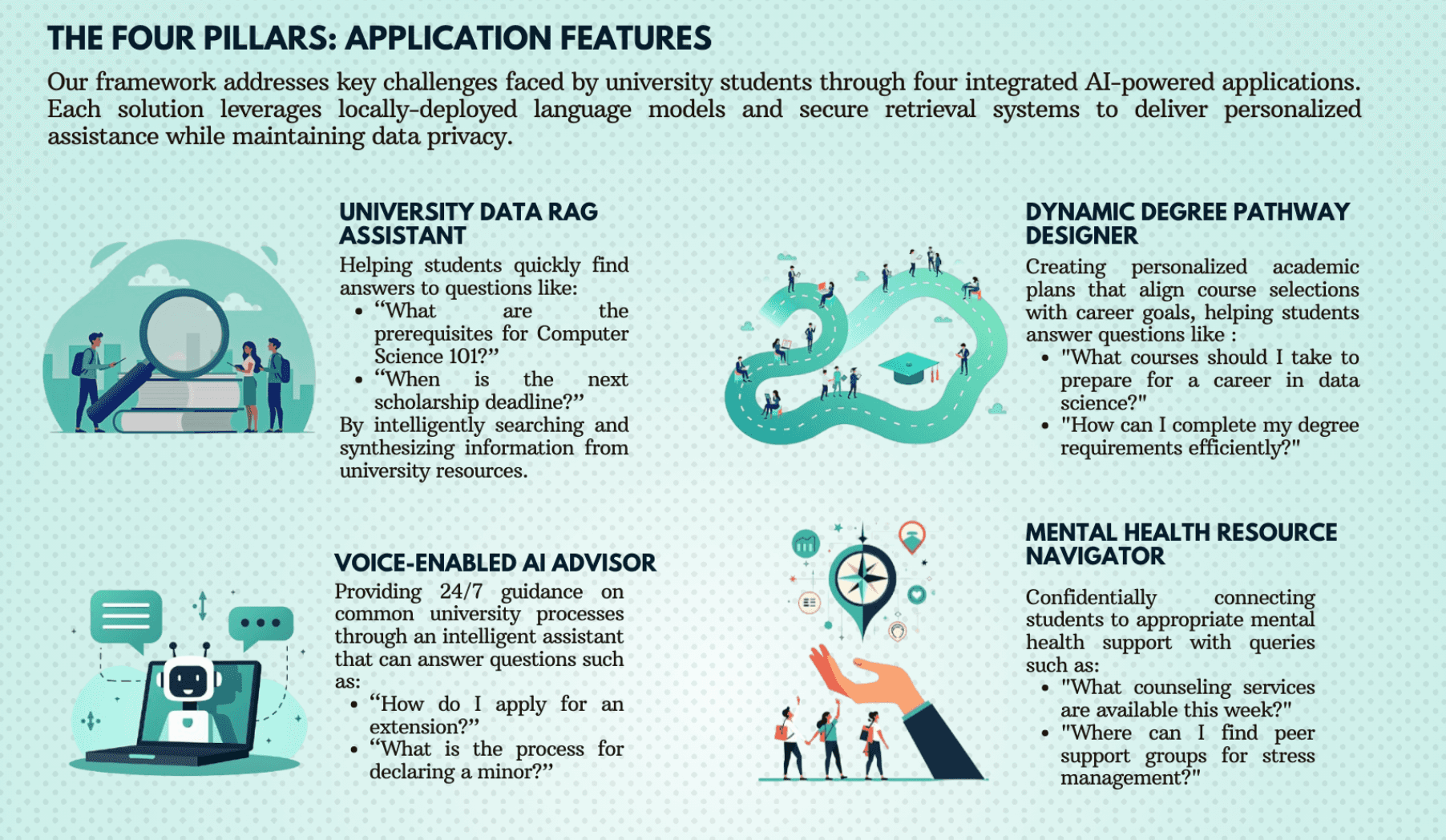

The framework addresses key student challenges through four integrated AI-powered applications, each built on locally deployed LLMs and secure retrieval systems for personalized, private assistance.

University Data RAG Assistant

Public TRU documents — course calendars, policies, FAQs — are chunked, converted into vector embeddings using models like Sentence-BERT, and stored in a local vector database such as ChromaDB. When a student submits a query, the system retrieves semantically relevant document chunks and feeds them to the locally hosted LLM, which synthesizes a grounded, accurate answer rooted in official TRU context.
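The chunk, embed, retrieve, and prompt steps described above can be sketched without any external dependencies. This is only an illustration under stated assumptions, not the project's implementation: `embed` is a toy bag-of-words stand-in for a Sentence-BERT model, the in-memory `index` list stands in for ChromaDB, and the function names and sample documents are hypothetical.

```python
import math
import re
from collections import Counter


def chunk_text(text: str, max_words: int = 40, overlap: int = 10) -> list[str]:
    """Split text into overlapping word windows (one simple chunking strategy)."""
    words = text.split()
    if not words:
        return []
    step = max_words - overlap
    last_start = max(len(words) - overlap, 1)
    return [" ".join(words[i:i + max_words]) for i in range(0, last_start, step)]


def embed(text: str) -> Counter:
    """Toy embedding: bag-of-words counts. A real system would call an embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, index: list[tuple[str, Counter]], k: int = 2) -> list[str]:
    """Return the k chunks most similar to the query."""
    q = embed(query)
    ranked = sorted(index, key=lambda item: cosine(q, item[1]), reverse=True)
    return [chunk for chunk, _ in ranked[:k]]


def build_prompt(query: str, chunks: list[str]) -> str:
    """Assemble the grounded prompt that would be sent to the local LLM."""
    context = "\n".join(f"- {c}" for c in chunks)
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"


# Invented sample text for illustration, not real TRU policy.
documents = [
    "Course registration opens in June for the fall term.",
    "The library lends laptops to students for up to seven days.",
]
index = [(chunk, embed(chunk)) for doc in documents for chunk in chunk_text(doc)]

question = "When does registration open?"
top = retrieve(question, index, k=1)
prompt = build_prompt(question, top)
print(prompt)
```

Swapping `embed` for a dense embedding model and `index` for a vector database changes the quality of the ranking, but the control flow stays the same.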

Dynamic Degree Pathway Designer

Beyond simple Q&A, this tool provides personalized academic planning. By interfacing with program requirements and anonymized student progression data, the LLM suggests course sequences, flags missing prerequisites, estimates time-to-completion, and aligns pathways with declared majors or career interests. Constraint checking against the course dependency graph keeps each suggested sequence valid.
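One way to picture the prerequisite checking is as a topological ordering over a course dependency graph. The sketch below uses Python's standard `graphlib` module; the course codes, the `PREREQS` table, the function names, and the two-courses-per-term limit are all invented for illustration and do not reflect real TRU requirements.

```python
from graphlib import TopologicalSorter

# Hypothetical course codes and prerequisites, invented for this example.
PREREQS = {
    "COMP 1000": set(),
    "COMP 1100": {"COMP 1000"},
    "COMP 2000": {"COMP 1100"},
    "MATH 1000": set(),
    "COMP 3000": {"COMP 2000", "MATH 1000"},
}


def missing_prerequisites(target: str, completed: set[str], prereqs: dict[str, set[str]]) -> set[str]:
    """Return every not-yet-completed course required (transitively) before target."""
    missing: set[str] = set()
    stack = list(prereqs.get(target, ()))
    while stack:
        course = stack.pop()
        if course in completed or course in missing:
            continue
        missing.add(course)
        stack.extend(prereqs.get(course, ()))
    return missing


def plan_terms(targets: list[str], completed: set[str],
               prereqs: dict[str, set[str]], max_per_term: int = 2) -> list[list[str]]:
    """Group outstanding courses into terms so prerequisites always come first."""
    needed = set(targets) - completed
    for target in targets:
        needed |= missing_prerequisites(target, completed, prereqs)
    graph = {course: prereqs.get(course, set()) & needed for course in needed}
    sorter = TopologicalSorter(graph)
    sorter.prepare()  # raises graphlib.CycleError if the requirements are circular
    terms: list[list[str]] = []
    pending: list[str] = []
    while sorter.is_active():
        pending.extend(sorter.get_ready())
        pending.sort()
        term, pending = pending[:max_per_term], pending[max_per_term:]
        terms.append(term)
        sorter.done(*term)  # courses unlock their dependents only after the term ends
    return terms


completed = {"COMP 1000"}
missing = missing_prerequisites("COMP 3000", completed, PREREQS)
plan = plan_terms(["COMP 3000"], completed, PREREQS)
print(sorted(missing))
print(plan, "terms to completion:", len(plan))
```

In a design like this, the LLM would propose or explain pathways while a deterministic checker of this kind validates them, so a fluent but invalid sequence is never shown to a student.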

Voice-Enabled AI Advisor

To enhance accessibility, this pillar integrates speech-to-text technologies (Whisper or Whisper.cpp variants) with text-to-speech output (Piper, Coqui TTS). Students can ask questions conversationally and receive spoken responses. This module acts as a voice frontend to the RAG pipeline, processing audio input, querying the backend, and vocalizing the LLM's generated response in real time.
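The voice frontend can be thought of as three pluggable stages wrapped around the RAG backend. The following sketch shows only that wiring: `VoiceAdvisor` and the stub functions are hypothetical names, and the stubs stand in for real Whisper, RAG, and Piper or Coqui TTS wrappers, whose actual APIs are not shown here.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class VoiceAdvisor:
    """Chains speech-to-text, the RAG backend, and text-to-speech."""
    transcribe: Callable[[bytes], str]   # would wrap a speech-to-text model
    answer: Callable[[str], str]         # would call the RAG pipeline
    synthesize: Callable[[str], bytes]   # would wrap a text-to-speech engine

    def handle(self, audio: bytes) -> bytes:
        question = self.transcribe(audio).strip()
        if not question:
            # Nothing intelligible was heard, so skip the LLM call entirely.
            return self.synthesize("Sorry, I did not catch that. Could you repeat your question?")
        return self.synthesize(self.answer(question))


# Stub stages so the sketch runs without any audio or model dependencies.
def fake_stt(audio: bytes) -> str:
    return audio.decode("utf-8")


def fake_rag(question: str) -> str:
    return f"You asked: {question}"


def fake_tts(text: str) -> bytes:
    return text.encode("utf-8")


advisor = VoiceAdvisor(transcribe=fake_stt, answer=fake_rag, synthesize=fake_tts)
reply = advisor.handle(b"Where is the registrar's office?")
print(reply.decode("utf-8"))
```

Keeping each stage behind a plain callable makes it straightforward to benchmark Whisper against Whisper.cpp, or Piper against Coqui TTS, without touching the rest of the pipeline.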

Mental Health Resource Navigator

This pillar is designed not to provide therapy but to confidentially connect students with existing TRU mental health resources. Using keyword detection and sentiment analysis, the system identifies queries related to stress, anxiety, or counseling needs and directs students to official resources such as TRU Counseling Services, peer support groups, and emergency contacts. Responses are kept safe, supportive, and strictly informational. Privacy is paramount here, so these queries are handled entirely by the locally hosted system.
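The keyword-detection half of this routing could look roughly like the sketch below. The keyword sets and the `route_query` function are hypothetical placeholders that would need review by counselling staff before any real use, the resource names are the ones mentioned above, and sentiment analysis is deliberately left out.

```python
import re

# Hypothetical keyword lists for illustration only. Order matters: more urgent
# resources are listed first so they appear first in the result.
ROUTES = [
    ("emergency contacts", {"emergency", "crisis", "unsafe"}),
    ("TRU Counseling Services", {"stress", "stressed", "anxiety", "anxious",
                                 "counseling", "counselling", "overwhelmed"}),
    ("peer support groups", {"lonely", "isolated", "homesick"}),
]


def route_query(text: str) -> list[str]:
    """Return the resources whose keywords appear in the query, most urgent first."""
    tokens = set(re.findall(r"[a-z]+", text.lower()))
    return [resource for resource, keywords in ROUTES if tokens & keywords]


print(route_query("I feel anxious and lonely before exams"))
print(route_query("What time does the library open?"))
```

An empty result means the query falls through to the general RAG assistant. The router only points to resources and never generates advice of its own.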

// Engineering

System Architecture

The project relies heavily on open-source tools to maintain transparency and control. Key technologies include:

- LLMs: Primarily exploring Llama 3 (8B/70B), Phi-3 (Mini/Medium), and Mistral 7B. The smaller models can run on a single local GPU, while the 70B variant typically needs quantization or multiple GPUs.

- RAG Framework: LangChain and LlamaIndex for orchestrating the retrieval and generation pipeline.

- Embedding Models: Sentence Transformers (e.g., all-MiniLM-L6-v2, bge-large-en) for producing dense text embeddings.

- Vector Database: ChromaDB or FAISS for efficient approximate nearest-neighbor similarity search.

- Voice Interface: Whisper (and Whisper.cpp for lower-latency inference) for speech-to-text; Piper and Coqui TTS for natural text-to-speech output.

- Backend / Frontend: Python with FastAPI or Flask for the inference server; React / Next.js for the web-based demonstrator interface.

Local Inference

Llama 3 (8B/70B), Phi-3, and Mistral variants run on local hardware so that no sensitive user query ever leaves the institutional environment.

RAG Orchestration

LangChain and LlamaIndex orchestrate retrieval over ChromaDB for efficient similarity search and context-grounded generation, with embedding models selected for academic and technical English.

Benchmarking Methodology

A critical component of the research involves rigorous benchmarking. Local models are compared against proprietary API-based counterparts (GPT-4o, Gemini Pro, Claude 3 Sonnet/Opus) across four key dimensions:

- Accuracy & Relevance: Using ROUGE/BLEU metrics, MMLU subsets, and human evaluation on TRU-specific question-answering datasets, scored for both the local models and the proprietary baselines.

- Latency: Measuring end-to-end response times on local hardware for both voice and text interfaces.

- Resource Efficiency: Tracking VRAM, RAM, and CPU utilization during inference to understand the hardware requirements of responsible local deployment.

- Privacy Compliance: Continuously auditing data flow during local processing to verify that no query or document data leaves the institutional boundary.
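Two of these dimensions, accuracy and latency, lend themselves to a small harness. The sketch below is an assumption about how such measurements might be set up rather than the project's actual tooling: `unigram_f1` is a simplified ROUGE-1-style score (an evaluation would more likely use an established metrics library), and `measure_latency` times any callable that stands in for a model.

```python
import re
import statistics
import time
from collections import Counter
from typing import Callable


def unigram_f1(candidate: str, reference: str) -> float:
    """ROUGE-1-style F1: overlap of unigram counts between an answer and a reference."""
    cand = Counter(re.findall(r"\w+", candidate.lower()))
    ref = Counter(re.findall(r"\w+", reference.lower()))
    overlap = sum((cand & ref).values())
    if overlap == 0:
        return 0.0
    precision = overlap / sum(cand.values())
    recall = overlap / sum(ref.values())
    return 2 * precision * recall / (precision + recall)


def measure_latency(model: Callable[[str], str], prompt: str, runs: int = 5) -> dict[str, float]:
    """Time repeated calls to a model function and summarize the wall-clock latency."""
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        model(prompt)
        samples.append(time.perf_counter() - start)
    return {"median_s": statistics.median(samples), "max_s": max(samples)}


def dummy_model(prompt: str) -> str:
    """Placeholder for a local LLM or an API-based baseline."""
    return "registration opens in june"


score = unigram_f1(dummy_model("When does registration open?"), "Registration opens in June.")
latency = measure_latency(dummy_model, "When does registration open?")
print(f"unigram F1: {score:.2f}, median latency: {latency['median_s']:.6f}s")
```

Running the same harness against each local model and each proprietary baseline keeps the comparison consistent, since every system is scored on identical prompts and references.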

// Mentorship

Research Support

I am profoundly grateful to my faculty supervisors, Dr. Ghazanfar Latif and Dr. Jaspreet Kaur, for their invaluable mentorship. Their expertise and encouragement have guided both the technical direction of this project and the broader research philosophy behind it. They fostered an environment in which I felt empowered to pursue work in an area I genuinely care about — improving the student journey through thoughtful, ethical technology. This project addresses challenges I have personally observed and experienced, and their trust in allowing me to tackle them is deeply appreciated.

I also extend my sincere thanks to the UREAP committee at Thompson Rivers University for recognizing the potential of this project and providing the operating grant that makes this research possible.

// Roadmap

Summer 2025

The UREAP funding marks the beginning of an exciting research phase. The immediate next steps involve setting up the development environment, refining the data ingestion pipeline for TRU documents, and conducting initial benchmarks of selected open-source LLMs against their proprietary counterparts. The work described here constitutes the primary research focus for Summer 2025.

Refine & Fine-tune Models

Systematically benchmark local LLMs and apply Parameter-Efficient Fine-Tuning methods such as LoRA using curated TRU-specific datasets — including question-answer pairs derived from official FAQs — to optimize model performance on university-related tasks.
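To show why LoRA counts as parameter-efficient: it freezes a weight matrix W and trains only two small matrices A and B, so the adapted layer computes Wx + (alpha / r) * B(Ax). The plain-Python sketch below illustrates that arithmetic; the 4096-dimensional layer, rank 8, and the helper names are illustrative assumptions, not settings chosen for this project.

```python
def matvec(matrix: list[list[float]], vector: list[float]) -> list[float]:
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(m * v for m, v in zip(row, vector)) for row in matrix]


def lora_forward(W, A, B, x, alpha: float) -> list[float]:
    """Frozen base output plus the scaled low-rank update: Wx + (alpha / r) * B(Ax)."""
    rank = len(A)                      # A has shape (r, d_in), B has shape (d_out, r)
    base = matvec(W, x)
    update = matvec(B, matvec(A, x))
    return [b + (alpha / rank) * u for b, u in zip(base, update)]


def lora_trainable_params(d_out: int, d_in: int, rank: int) -> int:
    """Only A (r x d_in) and B (d_out x r) are trained."""
    return rank * (d_in + d_out)


# Tiny worked example: B starts at zero, so the adapted layer initially matches the base layer.
W = [[1.0, 0.0], [0.0, 1.0]]
A = [[1.0, 1.0]]
B_zero = [[0.0], [0.0]]
B_trained = [[1.0], [0.0]]
x = [2.0, 3.0]
initial = lora_forward(W, A, B_zero, x, alpha=1.0)
adapted = lora_forward(W, A, B_trained, x, alpha=1.0)

# Illustrative sizes: one 4096 x 4096 projection adapted with rank 8.
full = 4096 * 4096
lora = lora_trainable_params(4096, 4096, 8)
print(initial, adapted)
print(f"trainable fraction: {lora / full:.4%}")
```

With these example sizes, the adapter trains well under one percent of the weights in that layer, which is what makes fine-tuning on local hardware plausible.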

Prototype & User Feedback

Develop functional prototypes for each of the four pillars and conduct usability testing with a small group of TRU students and staff. Feedback will be collected in line with ethics protocols and used to iterate on design, functionality, and response accuracy.

Strengthen Privacy & Security

Continuously audit and enhance data privacy measures. Implement robust access controls and data handling protocols. Explore advanced techniques such as differential privacy or homomorphic encryption where sensitive data processing is required.
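As one concrete example of these techniques, differential privacy is often introduced through the Laplace mechanism: noise scaled to sensitivity / epsilon is added to an aggregate before it is reported. The sketch below applies it to a hypothetical usage statistic (how many queries touched a given topic); the function names, the count of 120, and the epsilon value are assumptions made for illustration.

```python
import random


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Sample Laplace(0, scale) as the difference of two exponential samples."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def private_count(true_count: int, epsilon: float, rng: random.Random,
                  sensitivity: float = 1.0) -> float:
    """Release a count with epsilon-differential privacy via the Laplace mechanism.

    Sensitivity is 1 for a simple count, because adding or removing one
    student's query changes the result by at most 1.
    """
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    return true_count + laplace_noise(sensitivity / epsilon, rng)


rng = random.Random(42)  # fixed seed only so the example is repeatable
noisy = private_count(true_count=120, epsilon=0.5, rng=rng)
print(f"reported count: {noisy:.1f}")
```

A smaller epsilon adds more noise and gives stronger privacy, so the value would have to be chosen against how accurate the reported statistics need to be.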

Disseminate Findings

Document the research process, benchmarking results, and challenges encountered. Plan to present findings at student research conferences, publish results where appropriate, and release code components or evaluation datasets to benefit the wider educational technology community.

The ultimate impact of this project could be a replicable blueprint for institutions seeking to implement privacy-preserving AI student support systems. By demonstrating the viability of local, open-source solutions, this research aims to empower universities to leverage AI responsibly — proving that institutional care for student privacy and the power of modern language models are not mutually exclusive.

// Conclusion

Looking Forward

Embarking on this UREAP project is an extraordinary learning opportunity. I am eager to contribute to the development of AI tools that are not only intelligent but also ethical and secure — directly benefiting the TRU community in Kamloops. The research detailed here is my primary focus for Summer 2025, and I look forward to sharing progress and insights as the work unfolds.

I am always open to discussing ideas and learning from others in this field. If you have thoughts, insights, or suggestions regarding this project, please feel free to reach out. You can connect with me on LinkedIn, send me an email, or visit my contact page. Thank you again to the UREAP program and my supervisors for their trust and support.