// Overview

Breakthrough in accessible robotics

Project AURA represents a breakthrough in accessible robotics. Our team developed it during the Internet of Things course at Thompson Rivers University as a solution that bridges the gap between industrial-grade automation and human safety.

By replacing manual labor in "dull, dirty, and dangerous" environments with a vision-controlled robotic arm, AURA aims to protect workers while maintaining high precision and efficiency.

Its mission became clear through a simple factory-floor scenario: a worker repeatedly reaching into a dangerous machine such as a hydraulic press, where one moment of fatigue could become life-changing. That human risk is what led us to define the problem as the Complexity Gap.

On one side are industrial robots that are safe but expensive and difficult to program. On the other is manual labor that remains affordable but exposes people to avoidable danger. AURA was designed as the bridge between those extremes.

↑ AURA robotic arm prototype in action

// The Complexity Gap

Closing the safety divide

The robotics industry faces a critical accessibility challenge: traditional industrial robots cost $100,000+ and require PhD-level expertise to program, while manual labor is high-risk and leads to burnout. AURA shatters these barriers by providing a sub-$100 prototype that requires no code to operate.

The Market Disconnect

Industrial Robots

- Cost: $100,000+

- Programming: Requires PhD-level expertise

- Accessibility: Only available to major corporations

- Deployment Time: Months

Manual Labor Reality

- Cost: Low per-unit labor

- Safety: High risk of injury

- Sustainability: High employee burnout

- Precision: Variable and inconsistent

AURA Solution

- Cost: <$100 prototype (<$30 at scale)

- Programming: No code needed

- Accessibility: Democratized robotics

- Deployment: Immediate

These dull, dirty, and dangerous jobs are some of the clearest opportunities for automation, yet the tools to automate them have historically been locked behind price and expertise barriers. AURA was built to break both.

// Collaboration

Innovation Through Teamwork

Developed during the Fall 2025 Internet of Things course at Thompson Rivers University, AURA was guided by the expertise of Dr. Anthony Aighobahi and built as a collaborative effort across all five team members.

Our shared goal was to prove that high-end automation should not remain a luxury reserved for the world's richest corporations. The project blended robotics, AI, hardware prototyping, and accessibility thinking into a single system. Every member brought unique expertise to create something truly transformative.

Core Team

- Gursahib Singh

- Deepansh Sharma

- Yassh Singh

- Noori Arora

- Vansh Sethi

// Interaction

The No-Code Paradigm

Show, Don't Tell

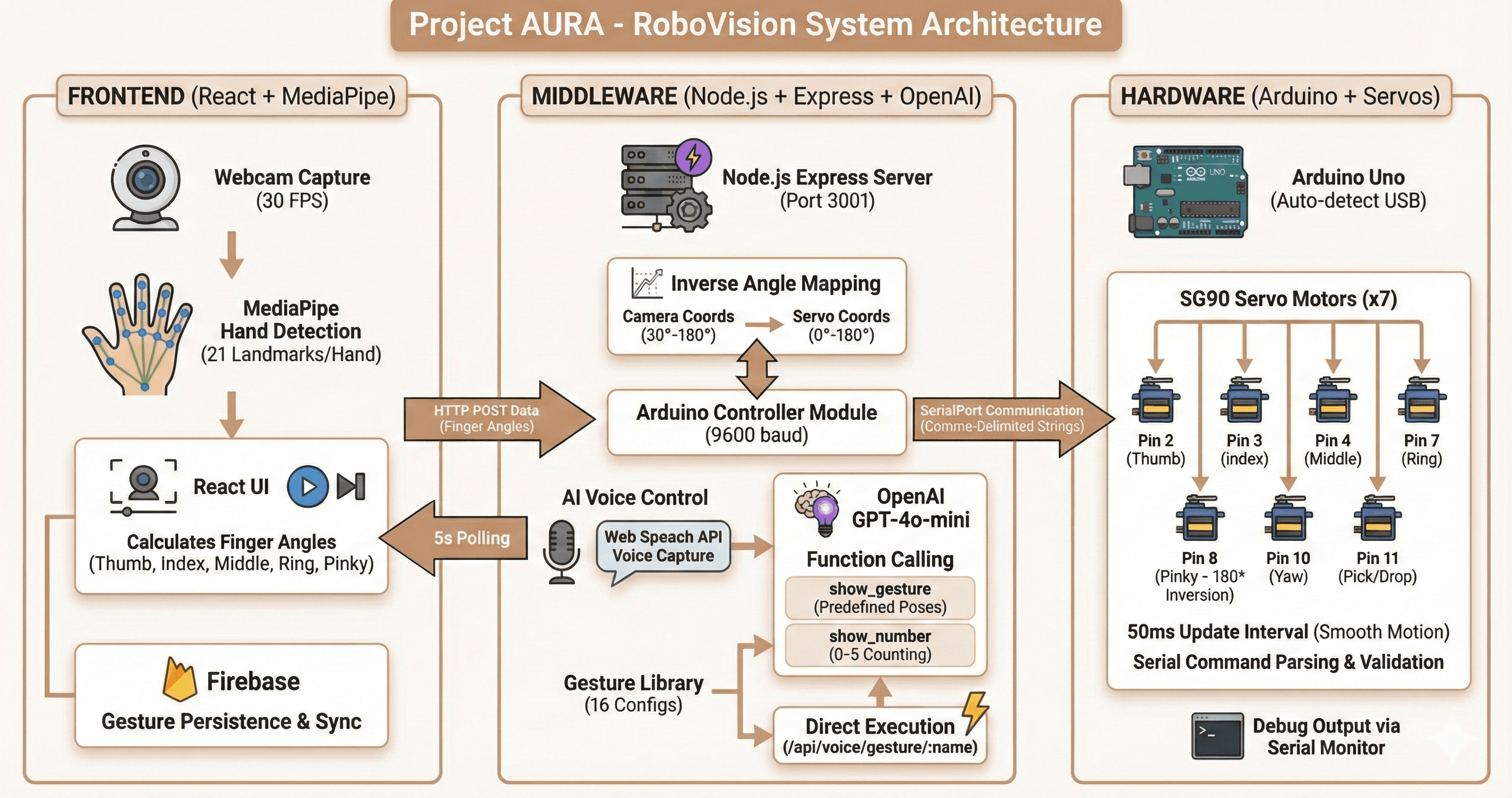

Workers capture their movements via webcam. Using Google MediaPipe and GPT-4o-mini, AURA interprets the worker's intent and mirrors hand movements in real time, eliminating the need for complex programming.

How the No-Code Loop Works

- 1. Visual Recognition: The system watches a worker's hand movements through a webcam instead of asking them to write code.

- 2. Intent Processing: MediaPipe extracts motion signals while GPT-4o-mini helps interpret those signals into actionable robotic intent.

- 3. Instant Mirroring: The robotic arm reproduces the demonstrated movement in real time with precision control.

- 4. Life-Saving Deployment: By replacing a human hand with AURA's 3D-printed grip in high-risk environments like hydraulic presses, the same interaction model is designed to keep workers out of harm's way while improving efficiency.
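To make the mirroring step concrete, here is a minimal sketch of how a tracked hand coordinate could be turned into a servo angle. It is an illustration under stated assumptions, not AURA's actual code: MediaPipe reports landmark x and y values normalized to the image size, while the 0 to 180 degree servo range, the `to_servo_angle` helper, and the `Smoother` class are hypothetical names and values chosen for this example.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical servo limits; the real limits of AURA's servos are not stated.
SERVO_MIN_DEG = 0.0
SERVO_MAX_DEG = 180.0


def to_servo_angle(normalized: float) -> float:
    """Map a normalized coordinate in [0, 1] to a servo angle in degrees."""
    # Landmarks can drift slightly outside the frame, so clamp first.
    clamped = min(1.0, max(0.0, normalized))
    return SERVO_MIN_DEG + clamped * (SERVO_MAX_DEG - SERVO_MIN_DEG)


@dataclass
class Smoother:
    """Exponential moving average that damps frame-to-frame jitter."""

    alpha: float = 0.5  # 1.0 means no smoothing; smaller values smooth more
    value: Optional[float] = None

    def update(self, sample: float) -> float:
        if self.value is None:
            self.value = sample  # first sample initializes the filter
        else:
            self.value = self.alpha * sample + (1 - self.alpha) * self.value
        return self.value
```

In a live loop, each frame's landmark coordinate would pass through `to_servo_angle` and then `Smoother.update` before being sent to the arm, trading a little latency for steadier motion.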

// Affordability

Democratizing Robotics

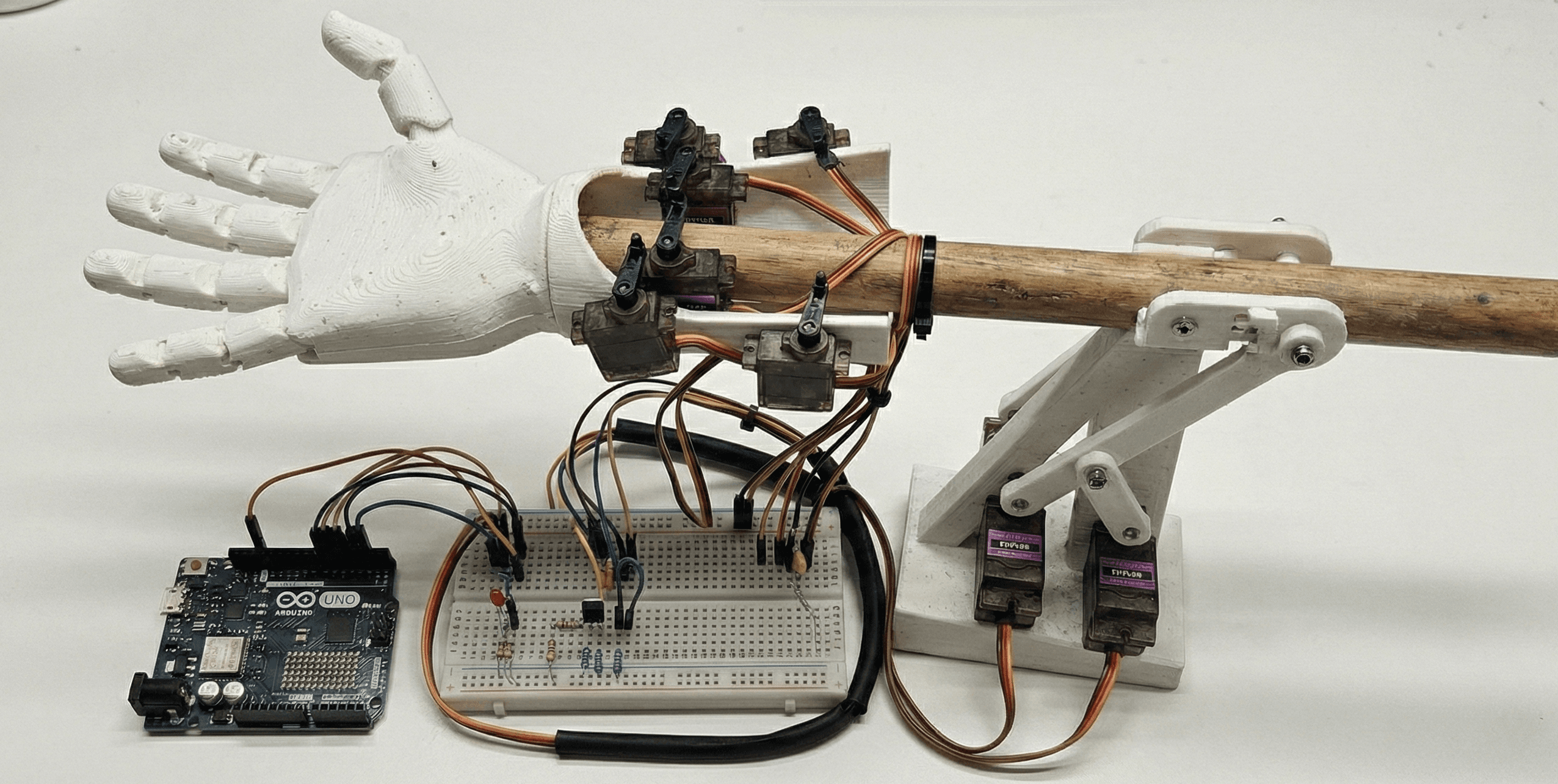

While traditional industrial arms cost as much as a luxury car, our prototype was built for under $100. At scale, we estimate the cost could drop to under $30, putting robotics within reach of small workshops.

Prototype Cost Drivers

- 3D-printed PLA structural parts kept fabrication inexpensive and fast to iterate.

- Arduino-based control, entry-level servos, and commodity webcam hardware reduced the electronics burden.

- Low-cost connectivity and modular assembly made the prototype realistic for educational and small-workshop settings.

Scale Production Path

- Injection molding would replace 3D-printed parts, with an estimated 60–70% cut in structural material costs.

- Bulk motor procurement is expected to deliver an additional 30–40% reduction on servo electronics.

- Integrated PCB manufacturing and broader supply-chain economies drive the total cost toward the sub-$30 target.

- That trajectory opens automation with industrial-grade safety standards to small family-owned workshops — not just major corporations.

The Vision

We are moving toward a future where a small family-owned workshop can have the same safety standards and precision as a massive automotive plant. Safety should never be a luxury — it should be universal. This is the total democratization of robotics.

// Academia

Research & Publication

AURA is also a contribution to the scientific community. Our research paper, covering kinematic modeling, IoT telemetry via Firebase, and neural no-code pipelines, is currently in the publication process at a reputable international conference.

Paper Coverage

- Kinematic modeling, inverse kinematics, and real-time control of the 6-DOF arm.

- Firebase-based IoT telemetry infrastructure for cloud logging and monitoring.

- Neural no-code programming flow built on computer vision and intent interpretation.

- Computer vision integration methodology — how MediaPipe pipelines are coupled to robotic actuation.

- Accessibility-first robotics design methodology for lower-cost deployment contexts.

The findings are intended to serve as a blueprint for the next generation of accessible robotics, inspiring further research into democratized automation. Full manuscript details will be shared publicly once publication milestones are complete.

// Engineering

Technical Stack

Key Technical Components

- MediaPipe Hand Tracking: Detects 21 hand landmarks in 3D space at roughly 95% accuracy and 30 fps.

- Servo Motor Array: 6-degree-of-freedom arm with synchronized servo control across all joints.

- Inverse Kinematics Engine: Computes joint angles from target end-effector positions in real time.

- Firebase Integration: Cloud-based telemetry for remote monitoring, logging, and performance analytics.
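The inverse kinematics idea can be illustrated on a much simpler arm than AURA's. The sketch below solves the textbook two-link planar case with the law of cosines. It is a simplified stand-in, not the project's 6-DOF solver, and the function names and link lengths are assumptions made for this example.

```python
import math


def two_link_ik(x, y, l1, l2):
    """Return (shoulder, elbow) angles in radians that place the tip at (x, y).

    Solves a planar two-link arm with link lengths l1 and l2 and returns
    the solution with a non-negative elbow angle.
    """
    cos_elbow = (x * x + y * y - l1 * l1 - l2 * l2) / (2 * l1 * l2)
    if abs(cos_elbow) > 1.0 + 1e-9:
        raise ValueError("target is out of reach")
    cos_elbow = max(-1.0, min(1.0, cos_elbow))  # absorb rounding error
    elbow = math.acos(cos_elbow)
    shoulder = math.atan2(y, x) - math.atan2(
        l2 * math.sin(elbow), l1 + l2 * math.cos(elbow)
    )
    return shoulder, elbow


def forward(shoulder, elbow, l1, l2):
    """Forward kinematics, used here to check the IK result."""
    x = l1 * math.cos(shoulder) + l2 * math.cos(shoulder + elbow)
    y = l1 * math.sin(shoulder) + l2 * math.sin(shoulder + elbow)
    return x, y
```

Feeding the returned angles back through `forward` should reproduce the requested target, which is a convenient sanity check before commanding real servos.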

End-to-End Data Flow

- 1. Capture: Webcam captures the user's hand gesture at 30 fps.

- 2. Process: MediaPipe extracts 21 keypoints in 3D space.

- 3. Understand: GPT-4o-mini interprets motion intent and maps it to a robotic command.

- 4. Calculate: The IK engine resolves target joint angles from the command.

- 5. Execute: Arduino drives all servo motors in synchronized motion.

- 6. Log: Firebase records telemetry data for cloud-side monitoring and analysis.
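The last two steps of this flow, handing joint angles to the microcontroller and recording telemetry, could be sketched as follows. The ASCII frame format, the 0 to 180 degree clamp, and the record fields are all hypothetical choices made for illustration. The source does not describe the actual wire protocol or Firebase schema.

```python
import time

NUM_JOINTS = 6  # the arm is described as 6-DOF


def encode_frame(angles_deg):
    """Encode joint angles as an ASCII frame such as b'<90,45,...>\\n'.

    Hypothetical format: angle brackets delimit the frame so a
    microcontroller can resynchronize after a dropped byte.
    """
    if len(angles_deg) != NUM_JOINTS:
        raise ValueError(f"expected {NUM_JOINTS} angles, got {len(angles_deg)}")
    # Round to whole degrees and clamp to an assumed 0-180 servo range.
    clamped = [max(0, min(180, round(a))) for a in angles_deg]
    return ("<" + ",".join(str(a) for a in clamped) + ">\n").encode("ascii")


def telemetry_record(angles_deg, gesture, timestamp=None):
    """Build a JSON-serializable record that a cloud logger could store."""
    return {
        "timestamp": time.time() if timestamp is None else timestamp,
        "gesture": gesture,
        "joint_angles_deg": [float(a) for a in angles_deg],
    }
```

Clamping on the host side means an out-of-range IK result never reaches the servos, even if the firmware performs no checks of its own.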

// Capabilities

System Capabilities

User-Facing

- No-Code Operation: Workers teach the robot through demonstration instead of scripts or industrial programming languages.

- Real-Time Mirroring: Hand motion translates into immediate robotic movement — intuitive enough for operators with no robotics background.

- Safety Override: Emergency stop and real-time manual control options ensure operators remain in full command at all times.

- Accessibility-First Design: Hardware and interaction model are intentionally low-cost and easy to approach, including for operators with varying physical abilities.

Technical Capabilities

- Multi-Gesture Recognition: Supports point, grab, rotate, and custom gestures for varied task profiles.

- Adaptive Learning: The system improves gesture recognition accuracy with repeated use.

- IoT Telemetry: Firebase enables remote monitoring, logging, and later analysis of system behavior over time.

- Modular Architecture: Computer vision, intent parsing, control logic, and hardware layers are decoupled and can evolve independently.
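As a rough illustration of gesture recognition on top of hand landmarks, the sketch below separates "point" from "grab" by checking which fingers are extended. The tip and joint indices follow MediaPipe's 21-point hand model. The distance heuristic and the `classify` function are assumptions made for this example, and the rotate and custom gestures are not covered here.

```python
import math

WRIST = 0
# (tip, middle-joint) landmark indices in MediaPipe's 21-point hand model.
FINGERS = {
    "index": (8, 6),
    "middle": (12, 10),
    "ring": (16, 14),
    "pinky": (20, 18),
}


def extended_fingers(landmarks):
    """Return the names of fingers whose tip is farther from the wrist
    than the finger's middle joint (a simple extension heuristic)."""
    wrist = landmarks[WRIST]
    return {
        name
        for name, (tip, joint) in FINGERS.items()
        if math.dist(landmarks[tip], wrist) > math.dist(landmarks[joint], wrist)
    }


def classify(landmarks):
    """Classify a hand pose as 'point', 'grab', or 'unknown'."""
    fingers = extended_fingers(landmarks)
    if fingers == {"index"}:
        return "point"
    if not fingers:
        return "grab"  # all four fingers curled toward the palm
    return "unknown"
```

Here `landmarks` is a list of 21 coordinate tuples, so the same function works with 2D or 3D points as long as every entry has the same dimension.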

// Roadmap

The Future of AURA

Near-Term (6–12 Months)

- Complete the peer review and academic publication process.

- Refine the kinematic model and expand industrial testing with real-world partners.

- Pursue partnership discussions with manufacturing facilities for prototype validation.

- Release an open-source version for educational environments once research milestones are complete.

Long-Term Vision

- Push manufacturing toward the sub-$30 target through injection molding for broader commercial deployment.

- Expand global accessibility, with a particular focus on developing countries where labor safety standards lag.

- Explore multi-arm coordination and swarm robotics for parallel task execution.

- Integrate AR/VR interfaces for advanced teleoperation and remote control scenarios.

The larger thesis behind AURA is simple: the Complexity Gap is closing. As AI becomes more accessible and manufacturing costs continue to drop, democratized automation will increasingly move from research labs into everyday industrial use.

We're the ones holding the door open.

Learn More

GitHub Repository: Full source code and documentation coming soon.

Research Paper: Complete technical details available upon publication.

Contact: Interested in collaboration or implementation? Get in touch!